Linear Transformation On X-vector For Text-independent Speaker

In this letter, we aim to obtain the linear transformation parameters on x-vectors. Fig. 1 shows the whole process of the proposed model. We first train the parallel factor analysis (FA) model with background i-vectors and x-vectors, and then take out the linear transformation parameters for x-vectors, as shown in the left part of Fig. 1. In the on-line process, shown in the right part of Fig. 1, the x-vector is the input to the linear transformation with parameters \(U_x\). The transformed x-vector, that is, the xl-vector, can be expressed as \(\phi_{xl} = L^{-1} U_x^{T} \Sigma_x^{-1} (\phi_x - m_x)\), where the posterior covariance is given by (12) …
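The on-line step can be illustrated with a minimal NumPy sketch. It assumes the standard factor analysis posterior-mean form, with posterior precision \(L = I + U^{T} \Sigma^{-1} U\); that form, the function name `transform_x_vector`, the dimensions, and the random parameters are all assumptions made for illustration and are not taken from the letter.

```python
import numpy as np

def transform_x_vector(phi_x, m_x, U_x, Sigma_x):
    """Map an x-vector to a lower-dimensional vector using the
    posterior mean of a standard FA model (assumed form)."""
    Sigma_inv = np.linalg.inv(Sigma_x)
    # Assumed posterior precision: L = I + U^T Sigma^{-1} U
    L = np.eye(U_x.shape[1]) + U_x.T @ Sigma_inv @ U_x
    # Solve L y = U^T Sigma^{-1} (phi - m) instead of forming L^{-1}
    return np.linalg.solve(L, U_x.T @ Sigma_inv @ (phi_x - m_x))

# Hypothetical parameters; in practice they would come from FA training.
rng = np.random.default_rng(0)
dim, rank = 6, 3
U_x = rng.standard_normal((dim, rank))               # loading matrix
m_x = rng.standard_normal(dim)                       # mean of x-vectors
Sigma_x = np.diag(rng.uniform(0.5, 1.5, size=dim))   # residual covariance
phi_x = rng.standard_normal(dim)                     # one input x-vector

phi_xl = transform_x_vector(phi_x, m_x, U_x, Sigma_x)
print(phi_xl.shape)
```

Because the parameters are fixed after training, the mapping is affine in the input: an x-vector equal to the mean maps to the zero vector, and scaling the centred input scales the output by the same factor.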

Total variability model based i-vectors and deep neural network based x-vector embeddings are both widely used for text-independent speaker verification. In this letter, a novel model is proposed that captures information from both the i-vector and the x-vector by using parallel factor analysis.

Fig. 1: Left part: training the linear transformation parameters \(\theta_x\) on background data. Right part: obtaining the xl-vector during the on-line process.

Figure: t-SNE visualisation of different systems.

The projection \(\operatorname{proj}_v\) is a linear transformation. Proof: for another vector \(w \in \mathbb{R}^n\) and a scalar \(c\), \(\operatorname{proj}_v(u + w) = \operatorname{proj}_v(u) + \operatorname{proj}_v(w)\) and \(\operatorname{proj}_v(cu) = c\,\operatorname{proj}_v(u)\). The point of such projections is that any vector \(u\) can be written uniquely as a sum of a vector parallel to \(v\) and another one perpendicular to \(v\): \(u = \operatorname{proj}_v(u) + (u - \operatorname{proj}_v(u))\). It is easy to check that \(u - \operatorname{proj}_v(u)\) is perpendicular to \(v\).

This page titled 5.3: Properties of Linear Transformations is shared under a CC BY 4.0 license and was authored, remixed, and/or curated by Ken Kuttler (Lyryx) via source content that was edited to the style and standards of the LibreTexts platform. Let \(T: \mathbb{R}^n \mapsto \mathbb{R}^m\) be a linear transformation.
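The projection properties can be checked numerically. The sketch below assumes the usual formula \(\operatorname{proj}_v(u) = \frac{u \cdot v}{v \cdot v}\, v\) for a nonzero \(v\); the function name `proj` and the sample vectors are chosen here for illustration only.

```python
import numpy as np

def proj(v, u):
    """Orthogonal projection of u onto the line spanned by a nonzero v."""
    return (np.dot(u, v) / np.dot(v, v)) * v

v = np.array([1.0, 2.0, 2.0])
u = np.array([3.0, -1.0, 4.0])
w = np.array([0.5, 2.0, -1.0])
c = 2.5

# Linearity: projection preserves sums and scalar multiples.
additive = np.allclose(proj(v, u + w), proj(v, u) + proj(v, w))
homogeneous = np.allclose(proj(v, c * u), c * proj(v, u))

# Decomposition of u into a part parallel to v and a part perpendicular to v.
parallel = proj(v, u)
perpendicular = u - parallel
is_perpendicular = np.isclose(np.dot(perpendicular, v), 0.0)

print(additive, homogeneous, is_perpendicular)
```

A numerical check on a few vectors is not a proof, but it is a quick way to catch a sign or normalisation mistake in the projection formula.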

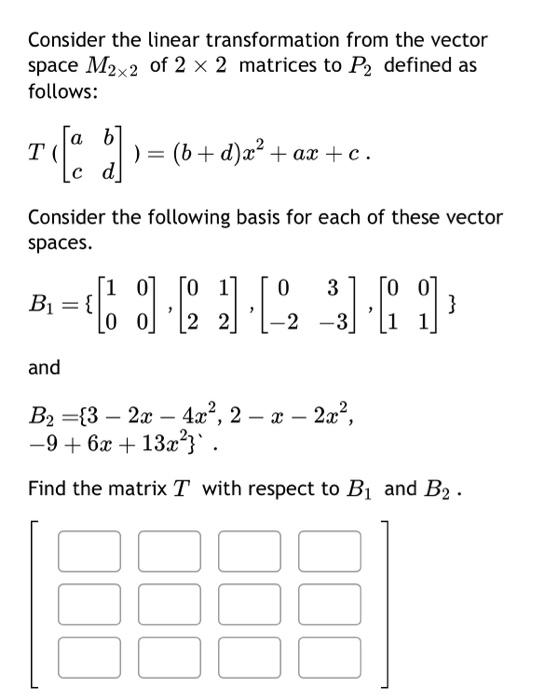

7. Linear Transformations. If \(V\) and \(W\) are vector spaces, a function \(T: V \to W\) is a rule that assigns to each vector \(v\) in \(V\) a uniquely determined vector \(T(v)\) in \(W\). As mentioned in Section 2.2, two functions \(S: V \to W\) and \(T: V \to W\) are equal if \(S(v) = T(v)\) for every \(v\) in \(V\). A function \(T: V \to W\) is called a linear transformation if it preserves addition and scalar multiplication: \(T(v + w) = T(v) + T(w)\) and \(T(cv) = cT(v)\) for all \(v, w\) in \(V\) and all scalars \(c\).

All of the linear transformations we’ve discussed above can be described in terms of matrices. In a sense, linear transformations are an abstract description of multiplication by a matrix, as in the following example.

Example 3: \(T(v) = Av\). Given a matrix \(A\), define \(T(v) = Av\). This is a linear transformation: \(A(v + w) = A(v) + A(w)\) and \(A(cv) = cA(v)\).
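As a small illustration of Example 3, the following sketch defines \(T(v) = Av\) for one arbitrarily chosen \(2 \times 3\) matrix and checks both linearity conditions on sample vectors. The matrix and vectors are assumptions picked for the example, not values from the source.

```python
import numpy as np

# An arbitrary 2x3 matrix, so T maps R^3 to R^2.
A = np.array([[2.0, -1.0, 0.0],
              [1.0,  3.0, 4.0]])

def T(v):
    """Linear transformation defined by multiplication with A."""
    return A @ v

v = np.array([1.0, 0.0, 2.0])
w = np.array([-1.0, 2.0, 0.5])
c = 3.0

# Additivity: T(v + w) equals T(v) + T(w).
additive = np.allclose(T(v + w), T(v) + T(w))
# Homogeneity: T(c v) equals c T(v).
homogeneous = np.allclose(T(c * v), c * T(v))

print(T(v), additive, homogeneous)
```

Both checks follow directly from the distributive and scalar rules of matrix multiplication, which is why every matrix defines a linear transformation in this way.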
